What «data-privacy compliant» actually means for AI

Many AI providers advertise GDPR compliance or EU server locations. That sounds reassuring — and still falls short. Data privacy for AI depends on three factors: server location, the legal entity of the provider, and the contractual basis.

A server location in Frankfurt or Geneva matters little when the provider is a US company. US companies are subject to the CLOUD Act — regardless of where their servers are located. This applies equally to OpenAI, Microsoft (Azure), Google (GCP), and Amazon (AWS).

The decisive question is therefore not «where is the server?» — but rather: «Which law governs the company processing my data?»

The AI chatbot as a concrete example

A website chatbot is a good example because it brings the data privacy questions into sharp focus. Every conversation flows through an AI inference API — and in doing so, potentially collects personal data: names, email addresses, concerns, purchase intentions.

Most AI gateways on the market — including those with EU branding — are US companies. This means: even if the server is in Frankfurt, US law governs the company operating it. The CLOUD Act applies.

What initially looks like a purely technical problem is in reality a legal question. And it can be solved with the right choice of provider. How to build a data-privacy compliant AI chatbot in practice is covered in a separate article.


The CLOUD Act — the underestimated risk

The CLOUD Act (Clarifying Lawful Overseas Use of Data Act) is a US federal law from 2018. It allows US law enforcement to compel US providers to hand over data in their possession, custody, or control, regardless of where the data is stored.

What this means in practice: a US company that stores data on EU servers must still hand it over when served with a valid US order. It is not required to inform you or the affected individuals, and a court can even prohibit it from doing so.

This is not a hypothetical scenario. US authorities actively use this capability. For Swiss SMEs with data protection obligations toward customers, employees, or authorities, this is a real legal risk — even if it rarely becomes visible in everyday operations.

A Data Processing Agreement (DPA) with a US provider reduces your compliance risk on paper. But it does not protect against access by US authorities. The DPA governs what the provider may do with your data, not what it must do when instructed by the US government.

Does an EU subsidiary protect against the CLOUD Act?

A common counterargument: Microsoft operates Microsoft Ireland, Google has Google Ireland Limited, Amazon runs Amazon EU SARL. Aren't these European companies under European law?

No, not under the CLOUD Act. The law covers all data within a US provider's «possession, custody, or control», and US courts generally read «control» as the legal right or practical ability to obtain the data. A parent company typically has exactly that over its subsidiaries.

A US authority obtains an order against Microsoft Corp — not Microsoft Ireland. Microsoft Corp then directs Microsoft Ireland to produce the data. The parent has both the legal obligation and the technical means to enforce compliance. Failing to do so risks contempt of court. Server location and the EU registration of the subsidiary change nothing about this logic.

Can the EU subsidiary refuse US data access?

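Theoretically yes, and here lies a genuine, unresolved legal conflict. GDPR Art. 48 provides that a court judgment or authority decision from a third country requiring the transfer of personal data can only be recognised or enforced if it is based on an international agreement, such as a mutual legal assistance treaty (MLAT), between that country and the EU or a Member State. An EU subsidiary can therefore invoke Art. 48 to refuse a direct US demand.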

Three reasons why this provides no safe harbour in practice:

The US court compels the parent — not the subsidiary. The order is directed at Microsoft Corp. The parent bears the obligation to comply and the risk of refusal.

There is no EU-US CLOUD Act agreement. The law includes a «qualifying foreign government» clause: if a country has concluded a bilateral CLOUD Act agreement with the US, providers can challenge orders that would force them to violate that country's law. The UK's agreement was signed in 2019 and entered into force in 2022. The EU has no such agreement, so there is no formal route for challenging a US order on the grounds that it conflicts with EU law.

The conflict falls back on the company. Complying with a CLOUD Act order can breach GDPR Art. 48; refusing exposes the parent to contempt of court. Microsoft and AWS are building «EU Sovereign Cloud» architectures intended to technically prevent US staff from accessing EU data. No court has yet recognised such measures as a legal barrier to a US order.
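The «qualifying foreign government» clause (codified at 18 U.S.C. § 2713(h)) can be sketched as a simple availability check. This minimal Python sketch assumes a simplified reading of the conditions: the customer is a non-US person living outside the US, and disclosure would violate the law of a country that has an agreement in force. The country set and the function name are illustrative assumptions. The sketch only models whether the challenge is available at all; how a court then weighs the conflict is outside its scope.

```python
from dataclasses import dataclass

# Illustrative set of countries with a CLOUD Act agreement in force.
# The text names only the UK ("GB"); verify against the current official list.
QUALIFYING_FOREIGN_GOVERNMENTS = {"GB"}


@dataclass
class Customer:
    is_us_person: bool
    resides_in_us: bool


def statutory_challenge_available(customer: Customer, conflicting_law: str) -> bool:
    """Return True if the provider may move to quash or modify the order.

    Simplified conditions: the customer is a non-US person living outside the US,
    and disclosure would conflict with the law of a qualifying foreign government.
    """
    non_us_customer = not customer.is_us_person and not customer.resides_in_us
    return non_us_customer and conflicting_law in QUALIFYING_FOREIGN_GOVERNMENTS


uk_customer = Customer(is_us_person=False, resides_in_us=False)
print(statutory_challenge_available(uk_customer, "GB"))  # True
eu_customer = Customer(is_us_person=False, resides_in_us=False)
print(statutory_challenge_available(eu_customer, "DE"))  # False: no EU-US agreement
```

For a customer whose data is protected by EU law, the check fails regardless of the other facts, which is the gap the text describes.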

Data residency ≠ data sovereignty. «Data residency» refers to where data is physically stored. «Data sovereignty» refers to who ultimately holds legal control. EU subsidiaries guarantee the former, not the latter. Only the absence of a US parent company in the control chain eliminates the CLOUD Act risk.
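The control-chain reasoning above can be expressed as a small check. The following is a minimal Python sketch under simplifying assumptions: the `Entity` model and the company names are hypothetical, each company has at most one controlling parent, and «control» is treated as a yes/no fact rather than the case-by-case legal question it is in practice. Server location is deliberately not an input, because it only determines data residency.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entity:
    """A company in a provider's control chain (hypothetical model)."""
    name: str
    incorporated_in: str                 # country code, e.g. "US", "IE", "CH"
    parent: Optional["Entity"] = None    # entity that controls this one, if any


def control_chain(entity: Entity) -> list[Entity]:
    """Return the entity followed by every entity above it, up to the top parent."""
    chain = []
    current: Optional[Entity] = entity
    while current is not None:
        chain.append(current)
        current = current.parent
    return chain


def cloud_act_exposed(entity: Entity) -> bool:
    """Simplified rule from the text: exposed if any entity in the chain is a US company."""
    return any(e.incorporated_in == "US" for e in control_chain(entity))


us_parent = Entity("ExampleCloud Inc.", "US")
eu_subsidiary = Entity("ExampleCloud Ireland Ltd", "IE", parent=us_parent)
swiss_provider = Entity("Example SA", "CH")
print(cloud_act_exposed(eu_subsidiary))   # True: EU registration, US parent
print(cloud_act_exposed(swiss_provider))  # False: no US entity in the chain
```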

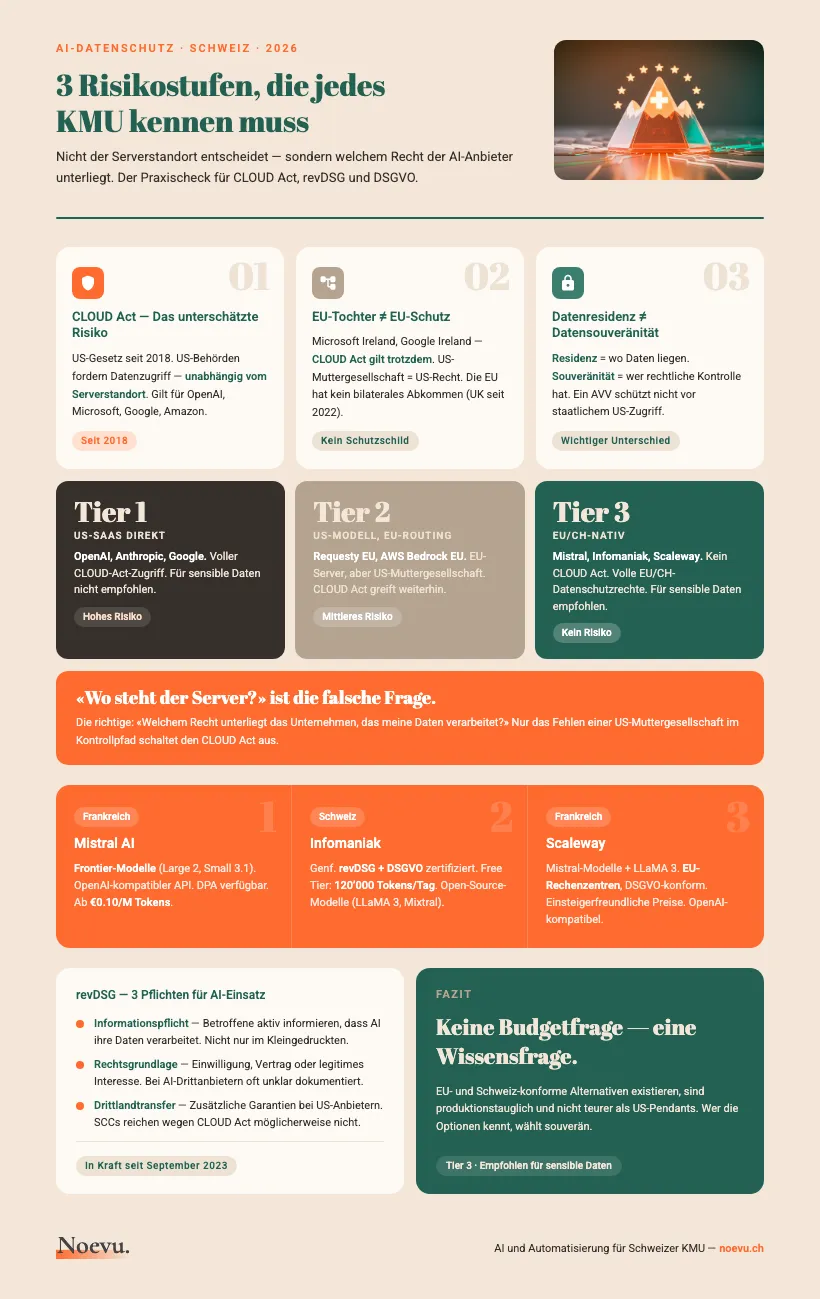

Three risk tiers at a glance

Not every AI solution carries the same risk. A three-tier framework helps with decision-making — depending on what data you process and what compliance requirements apply.

The classification is based on two criteria: legal entity of the provider (US or EU/CH) and data location. Both factors affect CLOUD Act risk and revDSG compliance.

| | Tier 1: US SaaS direct | Tier 2: US model, EU routing | Tier 3: EU/CH native |
|---|---|---|---|
| Server location | USA | EU (varies) | EU/Switzerland |
| Legal entity | US company | US company | EU/CH company |
| CLOUD Act risk | High | Medium | None |
| Known examples | OpenAI, Anthropic, Google | Requesty EU, AWS Bedrock EU | Mistral, Infomaniak, Scaleway |
| DPA available | Yes | Yes | Yes |
| For sensitive data | Not recommended | With limitations | Recommended |

Tier 2 also includes white-label products like EUrouter (technically identical to Requesty, US parent company). Legal classification as of April 2026.
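The table's two criteria can be combined into a lookup. This is a minimal Python sketch, assuming jurisdictions are reduced to three hypothetical codes ("US", "EU", "CH") and that `legal_entity` means the jurisdiction of the ultimate parent, so a US-owned gateway with an EU brand counts as "US". The function and enum names are illustrative, not part of any product or standard.

```python
from enum import Enum

JURISDICTIONS = {"US", "EU", "CH"}


class Tier(Enum):
    US_SAAS_DIRECT = 1        # US company, US servers
    US_MODEL_EU_ROUTING = 2   # US company (or US parent), EU/CH servers
    EU_CH_NATIVE = 3          # EU/CH company without US parent, EU/CH servers


CLOUD_ACT_RISK = {
    Tier.US_SAAS_DIRECT: "High",
    Tier.US_MODEL_EU_ROUTING: "Medium",
    Tier.EU_CH_NATIVE: "None",
}


def classify(legal_entity: str, server_location: str) -> Tier:
    """Map legal entity (ultimate parent) and server location to a tier."""
    if legal_entity not in JURISDICTIONS or server_location not in JURISDICTIONS:
        raise ValueError("expected 'US', 'EU' or 'CH'")
    if legal_entity == "US":
        # A US parent decides the legal exposure; the server only changes the tier.
        return Tier.US_SAAS_DIRECT if server_location == "US" else Tier.US_MODEL_EU_ROUTING
    if server_location != "US":
        return Tier.EU_CH_NATIVE
    raise ValueError("EU/CH company with US servers is not covered by the table")


tier = classify("US", "EU")  # e.g. a US-owned gateway routing through Frankfurt
print(tier.name, CLOUD_ACT_RISK[tier])  # US_MODEL_EU_ROUTING Medium
```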

Tier 1: US SaaS direct — when is this acceptable?

Direct US providers like OpenAI, Anthropic, or Google are not prohibited per se. What matters is which data you process.

Acceptable for: non-personal data (publicly available information, generic texts), internal use without personal data, or one-time tests without data persistence.

Not acceptable for: customer data (names, email, purchase history), employee data (applications, salary information), health or financial data, and confidential documents with business secrets.

Many SMEs use ChatGPT daily — often for tasks where Tier 1 is actually problematic. The risk is real, even if it rarely becomes immediately apparent.

OpenAI offers enterprise customers an EU data residency option. The data then stays on EU servers, but OpenAI remains a US company and the CLOUD Act still applies. EU data storage reduces the risk somewhat without eliminating it.

Tier 2: US models with EU routing

Tier 2 providers are EU-based gateways or cloud platforms that make US models available through European infrastructure. This sounds like the best of both worlds — but has a structural catch.

Requesty EU, for example, routes requests through a Frankfurt node (AWS eu-central-1). This reduces latency and maintains data residency in the EU. But the company behind Requesty is incorporated in the US. The CLOUD Act still applies.

Amazon Bedrock with EU cross-region inference profiles (for example eu-central-1, eu-west-1) works similarly: models such as Claude or Titan run on European servers, but Amazon remains a US company. The CLOUD Act risk persists.

EUrouter — also positioned as an EU alternative — is a white-label product of Requesty. Legally identical, despite the EU branding.

Tier 2 is a pragmatic middle ground. It is often sufficient for non-sensitive data and moderate compliance requirements. Anyone processing particularly sensitive personal data under the revDSG, such as health data, should choose Tier 3.
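The acceptability rules for the three tiers can be summarised as «the strictest data category decides». The following minimal Python sketch assumes hypothetical category labels and a mapping derived from the Tier 1 and Tier 2 guidance above; placing financial data in Tier 3 and customer or employee data in Tier 2 is an assumption, not a legal classification. Unknown categories default to Tier 3 as a conservative fallback.

```python
from enum import IntEnum


class Tier(IntEnum):
    TIER_1 = 1  # US SaaS direct
    TIER_2 = 2  # US model, EU routing
    TIER_3 = 3  # EU/CH native


# Hypothetical labels; the mapping is an assumption based on the text.
MINIMUM_TIER = {
    "public": Tier.TIER_1,                 # publicly available information, generic texts
    "internal_non_personal": Tier.TIER_1,  # internal use without personal data
    "customer": Tier.TIER_2,               # names, email, purchase history
    "employee": Tier.TIER_2,               # applications, salary information
    "business_secret": Tier.TIER_2,        # confidential documents
    "financial": Tier.TIER_3,
    "health": Tier.TIER_3,                 # particularly sensitive personal data
}


def minimum_tier(categories: set[str]) -> Tier:
    """Return the strictest tier any category requires; unknown categories map to Tier 3."""
    return max((MINIMUM_TIER.get(c, Tier.TIER_3) for c in categories), default=Tier.TIER_1)


print(minimum_tier({"public"}).name)              # TIER_1
print(minimum_tier({"public", "customer"}).name)  # TIER_2
print(minimum_tier({"customer", "health"}).name)  # TIER_3
```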

Tier 3: EU- and Swiss-native solutions

Tier 3 providers are legally incorporated in the EU or Switzerland and operate their infrastructure there. The CLOUD Act does not apply. EU/CH data protection law applies in full. This makes them the safest option for personal and sensitive data.

The following providers are relevant for Swiss SMEs — with different strengths depending on the use case:

Mistral AI — France

Infomaniak AI Tools — Switzerland

Scaleway Generative APIs — France

Apertus — Switzerland (in development)

Checklist: What to clarify before deploying AI

Before you deploy an AI service in production, fundamental questions should be clarified. This applies regardless of the provider — revDSG and GDPR require demonstrable decisions, not good intentions. A brief consultation helps find the right starting point.


What revDSG concretely requires from you

Switzerland's revised Federal Act on Data Protection (revDSG), in force since 1 September 2023, is technology-neutral: its requirements apply in full to personal data processed with AI. Three of them are particularly relevant for Swiss SMEs.

Information obligation. If you process personal data with AI, data subjects must be informed — actively and comprehensibly, not just in the fine print of your privacy policy.

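Legal basis. Under the GDPR, every processing operation requires a legal basis: consent, contract, or legitimate interest. The revDSG is less strict in principle, but it requires a justification, such as consent or an overriding interest, whenever processing infringes the data subjects' personality rights. With AI systems, this is often poorly documented, especially when data flows to third-party providers.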

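Third-country transfer. If data is transferred to a country without adequate protection, additional safeguards are required. For the USA, the Swiss Federal Council recognises adequate protection only for companies certified under the Swiss-US Data Privacy Framework. Standard Contractual Clauses (SCCs) are one option, but may not be sufficient for US providers because of the CLOUD Act. Violations have consequences: the Federal Data Protection and Information Commissioner (FDPIC) can issue binding orders, and cantonal authorities can fine responsible individuals up to CHF 250,000 for intentional breaches of certain duties.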

Start with the processing register. Document which AI tools you use, what data flows, and on what legal basis. This is not a bureaucratic exercise — it is the foundation on which you can demonstrate, when it matters, that you made a careful decision. One hour of effort saves many hours of explanation to the FDPIC.
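A processing register entry can be kept as structured data so that gaps show up automatically. This minimal Python sketch assumes hypothetical field names and checks only the three points above: documented data categories, a documented legal basis, and safeguards for third-country transfers. Treating only "CH" and "EU" as adequate destinations is a simplification; the binding list is set by the Swiss Federal Council.

```python
from dataclasses import dataclass, field

# Bases named in the text; the labels are hypothetical.
LEGAL_BASES = {"consent", "contract", "legitimate_interest"}
# Simplification: destinations treated as adequate in this sketch.
ADEQUATE_DESTINATIONS = {"CH", "EU"}


@dataclass
class RegisterEntry:
    tool: str                   # AI service in use
    purpose: str                # why the data is processed
    data_categories: list[str]  # what personal data flows to the tool
    legal_basis: str            # documented basis for the processing
    destination: str            # where the provider processes the data
    safeguards: list[str] = field(default_factory=list)  # e.g. ["SCC"]


def register_issues(entry: RegisterEntry) -> list[str]:
    """Return documentation gaps for one register entry."""
    issues = []
    if not entry.data_categories:
        issues.append(f"{entry.tool}: data categories not documented")
    if entry.legal_basis not in LEGAL_BASES:
        issues.append(f"{entry.tool}: no recognised legal basis documented")
    if entry.destination not in ADEQUATE_DESTINATIONS and not entry.safeguards:
        issues.append(f"{entry.tool}: third-country transfer without documented safeguards")
    return issues


chatbot = RegisterEntry(
    tool="Website chatbot",
    purpose="Answer customer enquiries",
    data_categories=["name", "email", "enquiry text"],
    legal_basis="consent",
    destination="CH",
)
print(register_issues(chatbot))  # []
```

Keeping the register as data rather than prose makes it easy to re-run the checks whenever a tool or provider changes.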


Conclusion: Compliance is not a label

«GDPR-compliant» and «EU server» on a vendor dashboard mean little without examining the CLOUD Act. The decisive question is: which law governs the company — not: where is the server.

For Swiss SMEs without a dedicated legal department: Tier 3 is the safe choice for sensitive data. Mistral (France) offers frontier models without CLOUD Act risk. Infomaniak (Switzerland) offers maximum local control for standard tasks.

For many everyday applications — draft texts, internal summaries, research without customer data — Tier 1 is pragmatically defensible as long as no personal data is involved.

The good news: EU- and Swiss-compliant alternatives exist, are production-ready, and are no more expensive than their US counterparts. The decision is not a budget question — it is a knowledge question. Whoever knows the options can choose confidently. And anyone who needs support with AI integration can find it without compromising on data privacy.

Which provider fits your setup and what exactly you need to document — that can be clarified in a short conversation. No jargon, tailored to your situation.